CrawlKit

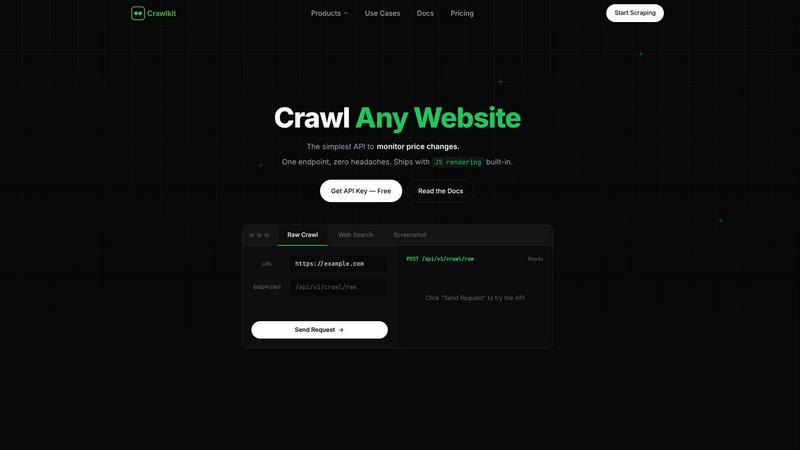

CrawlKit is a unified API platform that turns any website into structured data for enterprise automation and insights.

About CrawlKit

CrawlKit is an enterprise-grade web data extraction platform that exposes the web as a structured, reliable API. It is designed for developers, data engineers, and business intelligence teams who need scalable, high-fidelity access to web data without building and maintaining their own scraping infrastructure. The platform addresses the main obstacles in modern data collection: anti-bot protections, IP blocking, JavaScript rendering, and rate limiting. Behind its unified API, CrawlKit handles proxy rotation, headless browser automation, intelligent retries, and platform-specific parsing. This lets organizations shift resources from data collection to analysis and application development, shortening time-to-insight and improving ROI on data initiatives. Its core value proposition is clean, structured data from any public website or platform, including LinkedIn, Instagram, and major app stores, through a single, consistent API call, which makes it faster to build production-ready data pipelines.

Features of CrawlKit

Unified API for Diverse Data Sources

CrawlKit provides a single, consistent API for extracting structured data from a wide range of sources. Whether you need professional profiles from LinkedIn, social metrics from Instagram, search engine results, or app reviews from the Play Store and App Store, the interface stays the same. This removes the need to integrate and manage multiple specialized scraping tools, which streamlines development and reduces maintenance costs for data teams building comprehensive datasets.

Managed Infrastructure & Anti-Block Handling

The platform manages the technical burdens of reliable web scraping. It rotates residential proxies, executes JavaScript in headless browsers, circumvents anti-bot protections, and paces requests to respect rate limits. This keeps success rates and data completeness high, frees your team from the constant firefighting of IP bans and CAPTCHAs, and supports a steady flow of data for critical business processes.
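To make the idea of retries with request pacing concrete, here is a minimal, generic sketch of exponential backoff with jitter. It illustrates the general technique only and is not CrawlKit's internal implementation; the function name `fetch_with_retries` and its parameters are hypothetical.

```python
import random
import time


def fetch_with_retries(fetch, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fetch() until it succeeds, waiting longer after each failure.

    `fetch` is any zero-argument callable that raises on failure.
    `sleep` is injectable so the pacing can be observed without waiting.
    """
    for attempt in range(max_attempts):
        try:
            return fetch()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # Delays grow as 0.5 s, 1 s, 2 s, ... plus up to 100 ms of jitter
            sleep(base_delay * 2 ** attempt + random.uniform(0, 0.1))


# Simulated target that rejects the first two requests (hypothetical).
state = {"calls": 0}


def flaky_fetch():
    state["calls"] += 1
    if state["calls"] < 3:
        raise RuntimeError("blocked")
    return "page content"


waits = []
result = fetch_with_retries(flaky_fetch, sleep=waits.append)
print(result, "after", len(waits), "retries")
```

A managed service runs this kind of logic on its side, so client code normally issues one call and receives either data or a final error.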

Transparent, Credit-Based Pricing

CrawlKit operates on a simple, pay-as-you-go credit system with no monthly commitments or hidden fees. Each API call consumes a known number of credits, and credits never expire. The platform offers volume discounts and provides clear cost visibility per request. Crucially, it offers refunds on failed calls, ensuring you only pay for successful, usable data extraction, which aligns costs directly with value received and simplifies budget forecasting.

Enterprise-Grade Reliability & Data Quality

CrawlKit is built for production environments. It protects data quality by waiting for full page loads and validating responses before delivery, which keeps partial or broken data out of your pipelines. The service is designed for high availability and scales with your needs. It can be called from any programming language or platform, including Node.js, AWS, and Cloud Run, so it fits into existing technology stacks without vendor lock-in.

Use Cases of CrawlKit

CRM and Sales Intelligence Enrichment

Automatically enrich lead and contact records in your CRM with verified professional data. By pulling structured information from LinkedIn profiles and company pages (such as job titles, current company, experience, and skills), sales teams can prioritize leads, personalize outreach, and gain deeper account insights without manual research, boosting sales productivity and conversion rates.

Competitive and Market Intelligence

Systematically monitor competitors' digital footprints. Track product updates and customer sentiment via app store reviews, analyze marketing performance through Instagram engagement metrics, and gather intelligence from public company data on LinkedIn. This enables data-driven strategy, helping you identify market gaps, benchmark performance, and react swiftly to competitive moves.

Social Media Monitoring and Analytics

Build dashboards to track brand health and campaign performance across social platforms. Extract public data from Instagram to monitor follower growth, post engagement rates, and content trends for your brand or competitors. This provides actionable insights for marketing teams to optimize content strategy and measure ROI on social media initiatives effectively.

App Review and Product Feedback Analysis

Aggregate and analyze user reviews from the Google Play Store and Apple App Store at scale. Extract ratings, review text, and version data to perform sentiment analysis, identify recurring issues, and track feature requests. This empowers product managers to make informed development decisions, improve user satisfaction, and quickly address critical bugs reported by users.

Frequently Asked Questions

What platforms and data sources does CrawlKit support?

CrawlKit supports a wide and growing range of data sources through dedicated API endpoints. These include professional networks like LinkedIn (profiles, companies, jobs), social media like Instagram (profiles, posts), search engines, and app stores (Play Store, App Store). The platform is continually expanding, and the CrawlKit team encourages users to request new sources, often building custom APIs to meet specific enterprise needs.

How does CrawlKit handle websites with anti-bot protections?

CrawlKit's infrastructure is specifically engineered to bypass sophisticated anti-bot measures. It utilizes a global network of rotating residential proxies to mimic organic user traffic, employs full JavaScript rendering with headless browsers to load dynamic content, and implements advanced logic to solve common challenges like CAPTCHAs. This managed approach ensures high data retrieval success rates without requiring any custom engineering from your team.

What is CrawlKit's pricing model, and are there any minimums?

CrawlKit uses a transparent, credit-based pricing model with no monthly subscriptions or minimum spend requirements. You purchase credits upfront, and each API call consumes a set number (e.g., 1 credit for an Instagram profile, 2 for a LinkedIn company). Credits never expire, and you receive refunds for failed requests. Volume discounts are available, making it cost-effective to scale your data operations.
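As a rough illustration of how this credit model adds up, the sketch below totals the net credits for a small batch of calls. The two per-call costs are the examples quoted above; the dictionary keys, the `CallResult` type, and the `credits_charged` helper are hypothetical names introduced only for this example.

```python
from dataclasses import dataclass

# Per-call costs quoted in the answer above; other endpoints are not listed there.
CREDIT_COST = {"instagram_profile": 1, "linkedin_company": 2}


@dataclass
class CallResult:
    kind: str        # key into CREDIT_COST
    succeeded: bool  # failed calls are refunded


def credits_charged(calls):
    """Net credits consumed: only successful calls count, since failures are refunded."""
    return sum(CREDIT_COST[c.kind] for c in calls if c.succeeded)


calls = [
    CallResult("instagram_profile", True),
    CallResult("linkedin_company", True),
    CallResult("linkedin_company", False),  # refunded, so it costs nothing
]
print(credits_charged(calls))  # 3
```

Because credits do not expire and failed calls are refunded, a budget forecast under this model reduces to the expected number of successful calls per source multiplied by its credit cost.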

How do I get started and is there a free tier?

You can start immediately by signing up for a free account, which includes 100 complimentary credits to test the API. Simply install the official SDK (e.g., via npm for Node.js) or use direct HTTP requests, configure your API key, and begin making calls to supported endpoints. The playground and comprehensive documentation allow you to experiment and integrate CrawlKit into your data pipelines within minutes.
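For the direct HTTP route, a request might be assembled roughly as follows. This is a sketch under loud assumptions: the host `api.example.com`, the path `/v1/instagram/profile`, the bearer-token header, and the JSON body shape are all placeholders, not CrawlKit's documented API. Check the official documentation for the real values. The code only builds the request and does not send it.

```python
import json
import urllib.request

API_KEY = "YOUR_API_KEY"              # placeholder: use the key from your account
BASE_URL = "https://api.example.com"  # placeholder host, NOT CrawlKit's real one


def build_request(path, params):
    """Build (but do not send) an authenticated JSON POST request."""
    body = json.dumps(params).encode("utf-8")
    return urllib.request.Request(
        BASE_URL + path,
        data=body,
        headers={
            "Authorization": f"Bearer {API_KEY}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical endpoint path and parameter name, for illustration only.
req = build_request("/v1/instagram/profile", {"username": "example"})
print(req.get_method(), req.full_url)
# Sending would be: urllib.request.urlopen(req, timeout=30)
```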

Top Alternatives to CrawlKit

TrafficClaw

Talk to your SEO & Analytics data - it finally talks back

Formtorch

Formtorch enables developers to manage form submissions effortlessly, eliminating the need for backend setup and streamlining workflow automation.

Fusedash

Fusedash transforms raw data into actionable dashboards and reports to accelerate team productivity.

Idearium

Idearium builds high-converting websites that drive measurable business growth and ROI.

LinkFinder AI

LinkFinder AI instantly enriches leads with complete company data to accelerate sales and boost productivity.

BlitzAPI

BlitzAPI delivers clean B2B data via powerful APIs to automate and scale your GTM playbooks.

Echoloc

Echoloc turns job posts into actionable buying signals for sales teams to target ready-to-purchase accounts.

FilexHost

Effortlessly host and share any file with a secure, shareable link in seconds using FilexHost's intuitive drag-and-drop interface.