Best Boilerplates vs CrawlKit

Side-by-side comparison to help you choose the right tool.

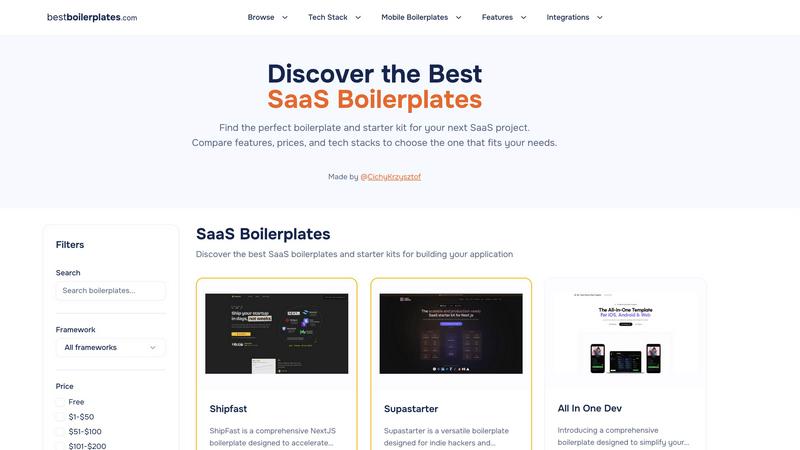

Best Boilerplates

Best Boilerplates accelerates SaaS launches by comparing tailored features, pricing, and tech stacks.

Last updated: March 1, 2026

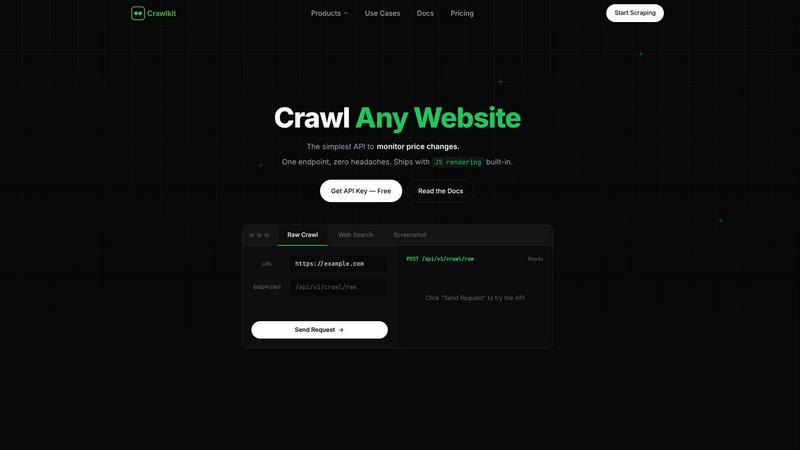

CrawlKit

CrawlKit is a unified API platform that turns any website into structured data for enterprise automation and insights.

Last updated: February 28, 2026

Feature Comparison

Best Boilerplates

Curated Comparison Engine

The platform's core functionality is its sophisticated comparison engine, which standardizes the evaluation of disparate boilerplate products. It presents side-by-side analyses of critical technical and commercial factors such as licensing costs, included integrations, supported authentication providers, database options, and code quality assessments. This feature empowers technical decision-makers to conduct due diligence efficiently, comparing apples-to-apples across dozens of options to identify the solution that offers the highest value and best fit for their specific stack and business requirements.

Advanced Filtering & Discovery

Best Boilerplates offers granular filtering capabilities that allow users to drill down precisely to the tools that match their project specifications. Filters span technology stack (framework), price range, and, most critically, specific required features like payment processors (Stripe, Paddle), multi-tenancy support, admin panels, CMS, or SEO tooling. This targeted discovery process dramatically reduces research overhead, ensuring developers and founders only spend time evaluating boilerplates that are technically compatible and functionally comprehensive for their intended application.
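The filtering described above can be pictured as a predicate over catalog entries. The following is a minimal sketch, not the platform's actual implementation: the `Boilerplate` record, the `filter_boilerplates` function, and the sample catalog entries are all hypothetical and exist only to illustrate combining stack, price, and required-feature filters.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Boilerplate:
    # Hypothetical record shape; the real listing schema is not documented here.
    name: str
    framework: str
    price_usd: float
    features: frozenset = field(default_factory=frozenset)


def filter_boilerplates(items, framework=None, max_price=None, required_features=()):
    """Keep entries that match the stack, fit the budget, and have every required feature."""
    required = set(required_features)
    return [
        b for b in items
        if (framework is None or b.framework == framework)
        and (max_price is None or b.price_usd <= max_price)
        and required <= b.features  # subset test: all required features present
    ]


# Made-up sample data for illustration only.
CATALOG = [
    Boilerplate("starter-a", "Next.js", 199.0, frozenset({"stripe", "multi-tenancy", "admin-panel"})),
    Boilerplate("starter-b", "Next.js", 0.0, frozenset({"stripe", "seo"})),
    Boilerplate("starter-c", "Laravel", 149.0, frozenset({"paddle", "admin-panel", "cms"})),
]

matches = filter_boilerplates(
    CATALOG,
    framework="Next.js",
    max_price=250,
    required_features=["stripe", "multi-tenancy"],
)
print([b.name for b in matches])
```

Treating required features as a subset test means adding another requirement can only narrow the result, which matches the "drill down" behavior the text describes.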

In-Depth Technical Audits

Beyond basic feature lists, each boilerplate listing includes a detailed technical audit and review. These assessments provide insights into code structure, scalability considerations, update frequency, and real-world reliability. This depth of analysis mitigates the significant risk of adopting a poorly architected foundation, which can lead to costly refactoring down the line. It provides the confidence needed for enterprise and startup teams to commit to a technology foundation that supports sustainable growth.

Vendor-Neutral Resource Hub

The platform maintains a vendor-neutral stance, showcasing both commercial and open-source starter kits. This objectivity ensures that recommendations are based on merit and fit rather than promotional influence. It serves as a centralized resource where teams can discover everything from premium, all-in-one solutions like ShipFast to highly specialized or open-source alternatives, so that every project profile, from indie hackathon builds to large-scale SaaS deployments, can find a suitable starting point.

CrawlKit

Unified API for Diverse Data Sources

CrawlKit provides a single, consistent API endpoint to extract structured data from a vast array of sources. Whether you need professional profiles from LinkedIn, social metrics from Instagram, search engine results, or app reviews from the Play Store and App Store, the interface remains the same. This eliminates the need to integrate and manage multiple specialized scraping tools, streamlining development and reducing maintenance costs for data teams building comprehensive datasets.

Managed Infrastructure & Anti-Block Handling

The platform manages the technical burdens of reliable web scraping. It automatically handles rotating residential proxies, executes JavaScript with headless browsers, works around anti-bot protections, and paces requests to stay within rate limits. This is intended to deliver high success rates and data completeness, freeing your team from the constant firefighting associated with IP bans and CAPTCHAs and supporting a steady flow of data for critical business processes.

Transparent, Credit-Based Pricing

CrawlKit operates on a simple, pay-as-you-go credit system with no monthly commitments or hidden fees. Each API call consumes a known number of credits, and credits never expire. The platform offers volume discounts and provides clear cost visibility per request. Crucially, it offers refunds on failed calls, ensuring you only pay for successful, usable data extraction, which aligns costs directly with value received and simplifies budget forecasting.

Enterprise-Grade Reliability & Data Quality

CrawlKit is built for production environments. It ensures data quality by waiting for full page loads and validating responses before delivery, preventing partial or broken data from entering your pipelines. The service is designed for high availability and to scale with your needs. Because the API can be called over plain HTTP, it works from any programming language or runtime, including Node.js, and from cloud platforms such as AWS and Cloud Run, so it fits into existing technology stacks without tying you to a particular one.

Use Cases

Best Boilerplates

Accelerating Startup MVP Launch

For startup founders and indie hackers operating with constrained resources and timelines, Best Boilerplates is indispensable for accelerating the MVP launch. By quickly identifying a boilerplate with pre-integrated user authentication, subscription billing, and a responsive UI, teams can bypass months of initial development. This allows them to validate their core business hypothesis with real users and begin generating revenue in a fraction of the time, providing a crucial competitive advantage and preserving runway.

Standardizing Enterprise Development Workflows

Within larger organizations or agencies, Best Boilerplates aids in standardizing technology selection for new client projects or internal tools. Technical leads can use the platform to establish approved, vetted boilerplates for common project types (e.g., SaaS dashboards, internal CRMs). This standardization improves team onboarding, ensures consistency in code quality and security practices, and reduces the maintenance burden across multiple projects, leading to higher overall team productivity and lower total cost of ownership.

Evaluating Technology Stack Migration

When a development team is considering a migration to a new framework or stack (e.g., moving from a monolithic setup to a modern React/Next.js architecture), Best Boilerplates provides a clear view of the available starter kits in that ecosystem. Teams can assess the maturity of available tools, the completeness of integrations, and community adoption to de-risk the migration strategy and select a foundation that will streamline the transition rather than complicate it.

Sourcing Production-Ready Templates for Specific Verticals

Developers building for specific verticals—such as AI-powered applications, marketplaces, or mobile apps—can leverage the platform's categorization to find specialized boilerplates. Instead of building complex features like real-time notifications, team management, or in-app purchase handling from scratch, they can select a template where these features are already production-tested and integrated, ensuring robustness and allowing the team to focus on building unique, differentiating functionality.

CrawlKit

CRM and Sales Intelligence Enrichment

Automatically enrich lead and contact records in your CRM with verified professional data. By pulling structured information from LinkedIn profiles and company pages—such as job titles, current company, experience, and skills—sales teams can prioritize leads, personalize outreach, and gain deeper account insights without manual research, boosting sales productivity and conversion rates.

Competitive and Market Intelligence

Systematically monitor competitors' digital footprints. Track product updates and customer sentiment via app store reviews, analyze marketing performance through Instagram engagement metrics, and gather intelligence from public company data on LinkedIn. This enables data-driven strategy, helping you identify market gaps, benchmark performance, and react swiftly to competitive moves.

Social Media Monitoring and Analytics

Build dashboards to track brand health and campaign performance across social platforms. Extract public data from Instagram to monitor follower growth, post engagement rates, and content trends for your brand or competitors. This provides actionable insights for marketing teams to optimize content strategy and measure ROI on social media initiatives effectively.

App Review and Product Feedback Analysis

Aggregate and analyze user reviews from the Google Play Store and Apple App Store at scale. Extract ratings, review text, and version data to perform sentiment analysis, identify recurring issues, and track feature requests. This empowers product managers to make informed development decisions, improve user satisfaction, and quickly address critical bugs reported by users.
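Once ratings, review text, and version data have been extracted, the analysis step can start very simply. The sketch below assumes a hypothetical record shape (`rating`, `version`, `text`) and made-up sample reviews; it does not reflect CrawlKit's actual response format, and plain keyword counting stands in for real sentiment analysis.

```python
from collections import Counter, defaultdict
from statistics import mean

# Made-up sample records; field names are assumptions, not a documented schema.
reviews = [
    {"rating": 5, "version": "2.1.0", "text": "Love the new dashboard"},
    {"rating": 1, "version": "2.1.0", "text": "App crash on login"},
    {"rating": 2, "version": "2.0.3", "text": "Login is slow and the app will crash sometimes"},
    {"rating": 5, "version": "2.0.3", "text": "Please add dark mode"},
]

# Hypothetical issue keywords a product team might track.
ISSUE_KEYWORDS = ("crash", "login", "slow")


def average_rating_by_version(records):
    """Group ratings by app version and average each group."""
    by_version = defaultdict(list)
    for r in records:
        by_version[r["version"]].append(r["rating"])
    return {version: mean(ratings) for version, ratings in by_version.items()}


def keyword_counts(records, keywords):
    """Count how many reviews mention each keyword as a whole word."""
    counts = Counter()
    for r in records:
        words = set(r["text"].lower().split())
        counts.update(k for k in keywords if k in words)
    return counts


ratings = average_rating_by_version(reviews)
issues = keyword_counts(reviews, ISSUE_KEYWORDS)
print(ratings)
print(issues.most_common())
```

Grouping by version makes a regression visible as a drop in the average for a specific release, while the keyword tally gives a rough first view of recurring issues.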

Overview

About Best Boilerplates

Best Boilerplates is a strategic, enterprise-grade platform designed to optimize the foundational phase of software development for technical leaders, product teams, and growth-focused founders. It functions as a centralized, analytical hub for comparing high-quality SaaS boilerplates, full-stack starter kits, and production-ready application templates. The platform directly addresses the critical business challenge of reducing time-to-market and minimizing technical debt at project inception. By aggregating a meticulously vetted selection of tools across dominant tech stacks like Next.js, React, Node.js, Laravel, Django, and Flutter, it eliminates weeks of fragmented research and evaluation. Each listing provides a comprehensive, data-driven breakdown of essential attributes including pricing models, integrated features (e.g., Stripe, multi-tenancy), authentication frameworks, database architectures, and deployment workflows. This enables stakeholders to make capital-efficient decisions, selecting a scalable, feature-rich foundation that aligns with both immediate MVP goals and long-term growth trajectories. For organizations prioritizing developer productivity and accelerated ROI, Best Boilerplates transforms the selection process from a risky guesswork exercise into a streamlined, evidence-based procurement strategy.

About CrawlKit

CrawlKit is an enterprise-grade web data extraction platform engineered to transform the web into a structured, reliable API. It is specifically designed for developers, data engineers, and business intelligence teams who require scalable, high-fidelity access to web data without the operational overhead of building and maintaining complex scraping infrastructure. The platform directly addresses critical challenges in modern data collection, including anti-bot protections, IP blocking, JavaScript rendering, and rate limiting. By providing a unified API, CrawlKit abstracts these complexities, handling proxy rotation, headless browser automation, intelligent retries, and platform-specific parsing. This allows organizations to shift resources from data collection to core data analysis and application development, accelerating time-to-insight and improving ROI on data initiatives. Its core value proposition is delivering clean, structured data from any public website or platform—including LinkedIn, Instagram, and major app stores—with a single, consistent API call, enabling the rapid construction of powerful, production-ready data pipelines.

Frequently Asked Questions

Best Boilerplates FAQ

What is the primary value proposition of Best Boilerplates for a business?

The primary value is time and capital savings. The platform consolidates what would typically be weeks of scattered research, trial-and-error testing, and technical evaluation into a single, efficient process. By enabling data-driven selection of a production-ready foundation, businesses can reduce their initial development timeline, lower the risk of technical missteps, and accelerate their path to a market-ready product, directly improving return on investment for development resources.

How does Best Boilerplates ensure the quality of its listed products?

Best Boilerplates employs a curated approach, aggregating and reviewing boilerplates based on a set of enterprise-grade criteria. This includes assessing the credibility of the creator, analyzing the completeness and architecture of the codebase, verifying the functionality of advertised integrations, and considering community feedback and adoption metrics. Listings include detailed breakdowns and transparent reviews to highlight both strengths and potential limitations, ensuring users have a realistic and comprehensive understanding.

Is Best Boilerplates suitable for non-technical founders or product managers?

Absolutely. While the content is technically detailed, the comparative format and clear categorization make it highly accessible. Non-technical stakeholders can use the platform to understand the landscape, compare key differentiators like cost and feature sets, and make informed budgetary and strategic decisions. It provides the necessary context to have productive, aligned discussions with their development team or agency about the foundational technology for their project.

Can I submit a boilerplate to be listed on Best Boilerplates?

Yes, the platform actively encourages submissions to maintain a comprehensive and current database. There is a clear "Add Boilerplate" call-to-action, inviting creators and vendors to submit their products for review. This ensures the marketplace remains dynamic and includes the latest and most innovative starter kits, providing continuous value to the developer and founder community seeking cutting-edge tools.

CrawlKit FAQ

What platforms and data sources does CrawlKit support?

CrawlKit supports a wide and growing range of data sources through dedicated API endpoints. This includes professional networks like LinkedIn (profiles, companies, jobs), social media like Instagram (profiles, posts), search engines, and app stores (Play Store, App Store). The platform is continually expanding, and the CrawlKit team encourages users to request new sources, often building custom APIs to meet specific enterprise needs.

How does CrawlKit handle websites with anti-bot protections?

CrawlKit's infrastructure is specifically engineered to bypass sophisticated anti-bot measures. It utilizes a global network of rotating residential proxies to mimic organic user traffic, employs full JavaScript rendering with headless browsers to load dynamic content, and implements advanced logic to solve common challenges like CAPTCHAs. This managed approach ensures high data retrieval success rates without requiring any custom engineering from your team.

What is CrawlKit's pricing model, and are there any minimums?

CrawlKit uses a transparent, credit-based pricing model with no monthly subscriptions or minimum spend requirements. You purchase credits upfront, and each API call consumes a set number (e.g., 1 credit for an Instagram profile, 2 for a LinkedIn company). Credits never expire, and you receive refunds for failed requests. Volume discounts are available, making it cost-effective to scale your data operations.
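As a worked example of the credit arithmetic, the sketch below uses the two per-call costs quoted above (1 credit for an Instagram profile, 2 for a LinkedIn company) and the 100 complimentary credits mentioned for the free account. The call-type names, the `credits_charged` helper, and the sample call log are hypothetical, and the sketch assumes a failed call is refunded in full.

```python
# Per-call costs taken from the examples in the text; the key names are made up.
CREDIT_COST = {
    "instagram_profile": 1,
    "linkedin_company": 2,
}

STARTING_CREDITS = 100  # the complimentary credits mentioned for a free account


def credits_charged(calls):
    """Sum credits for successful calls only; failed calls are assumed fully refunded."""
    return sum(CREDIT_COST[call_type] for call_type, succeeded in calls if succeeded)


# Hypothetical call log: (call type, whether the call succeeded).
call_log = [
    ("instagram_profile", True),
    ("instagram_profile", False),  # failed, so no net charge under the refund assumption
    ("linkedin_company", True),
    ("linkedin_company", True),
]

net_charge = credits_charged(call_log)
remaining = STARTING_CREDITS - net_charge
print(net_charge, remaining)
```

Here three successful calls cost 1 + 2 + 2 = 5 credits, leaving 95 of the starting 100; the failed Instagram call does not reduce the balance.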

How do I get started and is there a free tier?

You can start immediately by signing up for a free account, which includes 100 complimentary credits to test the API. Simply install the official SDK (e.g., via npm for Node.js) or use direct HTTP requests, configure your API key, and begin making calls to supported endpoints. The playground and comprehensive documentation allow you to experiment and integrate CrawlKit into your data pipelines within minutes.

Alternatives

Best Boilerplates Alternatives

Best Boilerplates is a specialized comparison platform within the tech tools category, designed to help developers and product teams evaluate SaaS boilerplates and full-stack starter kits. It aggregates detailed listings across major tech stacks to accelerate project launches by providing transparent data on features, integrations, and pricing.

Users often seek alternatives due to specific platform requirements, budget constraints, or the need for a different feature set not covered by the available comparisons. Some may require a more niche tech stack analysis or a different depth of vendor information to meet their unique project specifications.

When evaluating an alternative, prioritize a platform's comprehensiveness in covering your required technology frameworks, the clarity of its pricing and feature breakdowns, and the quality of its integration insights. The ideal tool should deliver a strong return on investment by drastically reducing research time and mitigating the risk of selecting an unsuitable foundation for your application.

CrawlKit Alternatives

CrawlKit is a sophisticated API platform in the web data extraction and analytics category, designed to automate the collection of public web data at scale. It removes the technical burden of proxy management, browser automation, and bypassing anti-bot measures, allowing data teams to focus on deriving actionable insights and ROI from the data itself.

Businesses may evaluate alternatives to CrawlKit for several strategic reasons. These include budget constraints and pricing model alignment, specific feature requirements not covered by the core offering, or the need for a different deployment model such as on-premises software versus a managed API service. Platform integration capabilities and the level of required technical support are also key decision drivers.

When assessing any alternative solution, enterprises should prioritize proven reliability and high success rates in data delivery, as downtime directly impacts data pipelines and decision-making. Scalability to handle increasing data volumes, robust security and compliance protocols for handling sensitive data, and the total cost of ownership beyond just subscription fees are critical evaluation metrics. The ideal platform should demonstrably increase team productivity by reducing maintenance overhead.