Fallom vs qtrl.ai

Side-by-side comparison to help you choose the right AI tool.

Fallom

Fallom delivers real-time observability for LLMs, covering tracking, debugging, and cost management for AI operations.

Last updated: February 28, 2026

qtrl.ai

qtrl.ai scales QA with AI agents while ensuring full enterprise control and governance.

Last updated: March 4, 2026

Feature Comparison

Fallom

Real-Time Observability

Fallom offers real-time observability for AI agents: teams can track tool calls, analyze execution timing, and debug performance issues. This visibility helps them identify bottlenecks and optimize workflows early.

Cost Attribution

With Fallom, businesses can track spending per model, user, and team, achieving full cost transparency for budgeting and chargeback purposes. This feature empowers organizations to manage their AI expenditures effectively and optimize resource allocation.
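As a rough illustration of what per-model, per-user, and per-team attribution involves, the sketch below sums costs over a list of call records. The `LLMCall` record shape and the `attribute_costs` helper are assumptions made for this example; they are not Fallom's schema or API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LLMCall:
    """One traced LLM call (hypothetical record shape, not Fallom's schema)."""
    model: str
    user: str
    team: str
    cost_usd: float


def attribute_costs(calls, dimension):
    """Sum cost_usd per distinct value of `dimension` ('model', 'user', or 'team')."""
    if dimension not in ("model", "user", "team"):
        raise ValueError(f"unknown dimension: {dimension}")
    totals = defaultdict(float)
    for call in calls:
        totals[getattr(call, dimension)] += call.cost_usd
    return dict(totals)


# Illustrative data only.
calls = [
    LLMCall("model-a", "alice", "search", 0.50),
    LLMCall("model-b", "alice", "search", 0.25),
    LLMCall("model-a", "bob", "support", 1.00),
]

by_team = attribute_costs(calls, "team")
by_model = attribute_costs(calls, "model")
```

The same records answer both a chargeback question (`by_team`) and a model-selection question (`by_model`), which is why per-call cost is worth capturing at trace time.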

Compliance Ready

Fallom provides comprehensive audit trails that help organizations meet regulatory requirements such as the EU AI Act, SOC 2, and GDPR. Features like input/output logging, model versioning, and user consent tracking support compliance and organizational accountability.

Session Tracking and Debugging

Fallom allows users to group traces by session, user, or customer, providing complete contextual visibility into interactions. This feature facilitates debugging and enhances the understanding of user behavior, enabling data-driven decision-making.
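Grouping traces by a shared key can be sketched in a few lines. The field names used here (`trace_id`, `session_id`, `user_id`, `started_at`) and the `group_traces` helper are hypothetical, chosen only to show the idea; they do not describe Fallom's data model.

```python
from collections import defaultdict


def group_traces(traces, key):
    """Group trace dicts by `key`, ordering each group by start time."""
    groups = defaultdict(list)
    for trace in traces:
        groups[trace[key]].append(trace)
    for items in groups.values():
        items.sort(key=lambda t: t["started_at"])
    return dict(groups)


# Illustrative traces; field names are assumptions for this sketch.
traces = [
    {"trace_id": "t2", "session_id": "s1", "user_id": "u1", "started_at": 20},
    {"trace_id": "t1", "session_id": "s1", "user_id": "u1", "started_at": 10},
    {"trace_id": "t3", "session_id": "s2", "user_id": "u1", "started_at": 15},
]

by_session = group_traces(traces, "session_id")
by_user = group_traces(traces, "user_id")
```

Reading `by_session["s1"]` in order reconstructs one conversation, while `by_user["u1"]` shows the same user's activity across sessions.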

qtrl.ai

Enterprise-Grade Test Management

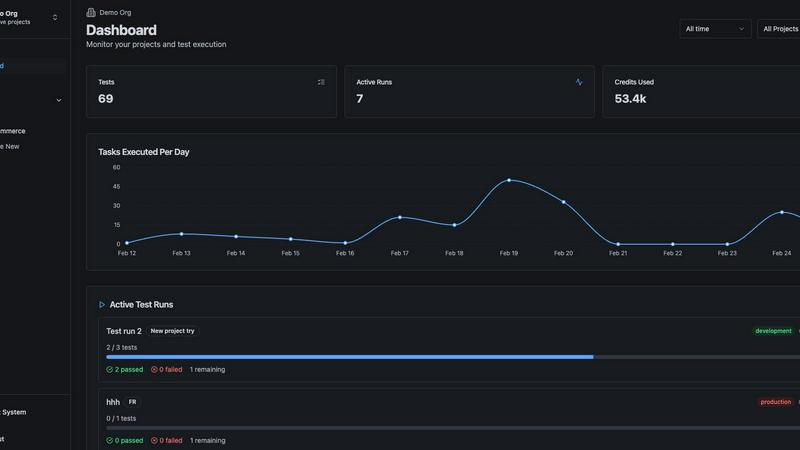

qtrl provides a centralized hub for all quality assurance activities, offering structured test case management, planning, and execution tracking. It ensures full traceability from requirements to test coverage, creating the clear audit trails that compliance-driven environments need. Teams can manage both manual and automated workflows within a single platform, and real-time, customizable dashboards give engineering leads and QA managers visibility into testing status, pass/fail rates, and potential risk areas.

Progressive AI Automation & Autonomous Agents

Unlike all-or-nothing AI solutions, qtrl introduces intelligent automation progressively. Teams begin with human-written test instructions. When ready, they can leverage built-in autonomous AI agents that generate executable UI tests from plain English descriptions, maintain them as the application evolves, and run them at scale. This feature allows for a controlled adoption curve, where AI suggestions are fully reviewable and approvable, ensuring teams never lose oversight while significantly accelerating test creation and maintenance.

Governance by Design & Permissioned Autonomy

qtrl is built with enterprise trust and security as foundational principles. It offers configurable autonomy levels, ensuring AI agents operate strictly within user-defined rules and permissions. The platform provides full visibility into every agent action, eliminating "black-box" decisions. With enterprise-ready security, encrypted secrets management, and the guarantee that secrets are never exposed to AI agents, qtrl delivers the governance required for sensitive and regulated industries.

Adaptive Memory & Multi-Environment Execution

The platform's Adaptive Memory system builds a living, evolving knowledge base of your application by learning from every exploration, test execution, and resolved issue. This powers context-aware, smarter test generation over time. Coupled with robust multi-environment execution capabilities, teams can run tests across development, staging, and production environments with per-environment variables, ensuring consistency and reliability throughout the CI/CD pipeline.
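One way to picture per-environment variables is as overrides layered on shared defaults. The sketch below assumes that model; `EnvironmentConfig`, `resolve_variables`, and the variable names are illustrative and are not taken from qtrl's documentation.

```python
from dataclasses import dataclass, field


@dataclass
class EnvironmentConfig:
    """A named environment with its own variable overrides (hypothetical shape)."""
    name: str
    variables: dict = field(default_factory=dict)


def resolve_variables(shared, environment):
    """Merge shared defaults with per-environment overrides; overrides win."""
    return {**shared, **environment.variables}


# Illustrative values only.
shared = {"BASE_URL": "http://localhost:3000", "TIMEOUT_SECONDS": "30"}
staging = EnvironmentConfig("staging", {"BASE_URL": "https://staging.example.com"})

resolved = resolve_variables(shared, staging)
```

With this layering, the same test definition can run against development, staging, or production by swapping only the `EnvironmentConfig` passed in.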

Use Cases

Fallom

Enhancing Debugging Processes

Organizations can leverage Fallom to streamline their debugging processes by tracing every function call, argument, and result in their agents. This visibility reduces the time spent on identifying and resolving issues within LLM workflows.

Budget Management and Cost Control

Fallom's cost attribution feature is ideal for finance teams that need to monitor and control AI-related expenditures. By tracking costs associated with each model and user, businesses can make informed decisions about their AI investments.

Regulatory Compliance Management

For businesses operating in regulated industries, Fallom provides the necessary tools to maintain compliance with audit trails and privacy controls. This ensures that organizations can operate confidently while adhering to stringent legal requirements.

Performance Evaluation and Optimization

Fallom enables organizations to run evaluations on LLM outputs, allowing teams to catch regressions before production. This capability helps maintain high-quality outputs and optimizes the overall performance of AI models.

qtrl.ai

Scaling QA Beyond Manual Testing

For teams overwhelmed by repetitive manual test cycles, qtrl provides a structured path to automation. Teams can start by documenting manual test cases in the platform, then progressively use AI agents to automate the most time-consuming scripts. This frees QA personnel for higher-value exploratory testing and reduces time-to-market for new features, without a steep initial learning curve.

Modernizing Legacy QA Workflows

Organizations reliant on outdated, siloed, or script-heavy automation frameworks can use qtrl to consolidate and modernize their entire QA process. The platform integrates with existing tools and requirements management systems, bringing test management, automation, and execution into a single, governed environment. This streamlines workflows, reduces maintenance costs of brittle scripts, and establishes a scalable foundation for continuous quality improvement.

Ensuring Governance in Regulated Enterprises

For enterprises in finance, healthcare, or government requiring strict compliance, audit trails, and change control, qtrl's governance-by-design approach is critical. The platform ensures full traceability for every requirement, test, and result, with permissioned autonomy that keeps AI actions accountable. This use case enables these organizations to leverage AI for productivity gains while fully meeting internal and external regulatory audit requirements.

Empowering Product-Led Engineering Teams

Product-led growth teams that deploy frequently need rapid, reliable quality feedback. qtrl integrates seamlessly into CI/CD pipelines, providing continuous quality feedback loops. Autonomous agents can be triggered on-demand to validate new builds or user journeys, ensuring that rapid iteration does not compromise software quality. This accelerates release velocity while providing engineering leads with confidence in each deployment.

Overview

About Fallom

Fallom is an AI-native observability platform for large language model (LLM) and agent workloads. It gives organizations visibility into every LLM call made in production through end-to-end tracing that covers prompts, outputs, tool calls, tokens, latency, and cost per call. Teams can monitor usage in real time, debug issues quickly, and allocate spending across models, users, and teams.

Fallom also provides session, user, and customer-level context, which is particularly important for organizations operating in regulated environments. This includes enterprise-ready audit trails, logging, model versioning, and consent tracking to help meet compliance standards. With a single OpenTelemetry-native SDK, teams can instrument their applications within minutes.

About qtrl.ai

qtrl.ai is an enterprise-grade, AI-powered QA platform that helps software development teams scale their quality assurance operations while maintaining rigorous control and governance. It addresses a common dilemma: manual testing is slow and does not scale, while traditional test automation is brittle and expensive to maintain. qtrl combines centralized test management with a progressive layer of AI automation.

The platform serves as a single source of truth for organizing test cases, planning test runs, tracing requirements to coverage, and tracking real-time quality metrics through dashboards. It is aimed at product-led engineering teams, QA groups transitioning from manual processes, organizations modernizing legacy workflows, and enterprises with strict compliance and auditability requirements.

qtrl's mission is to bridge the gap between control and speed, enabling teams to adopt intelligent automation incrementally without the risks associated with opaque, "black-box" AI solutions.

Frequently Asked Questions

Fallom FAQ

What is Fallom used for?

Fallom is used to observe large language model operations, so organizations can monitor, debug, and optimize their AI workloads.

How does Fallom ensure compliance with regulations?

Fallom ensures compliance by providing comprehensive audit trails, input/output logging, model versioning, and user consent tracking, making it suitable for organizations in regulated industries.

Can Fallom integrate with existing AI infrastructures?

Yes, Fallom utilizes a single OpenTelemetry-native SDK that is compatible with various AI providers, ensuring seamless integration into existing systems without vendor lock-in.

How quickly can teams get started with Fallom?

Teams can set up Fallom in under five minutes, allowing organizations to start tracing and monitoring their AI agents almost immediately, boosting productivity from the outset.

qtrl.ai FAQ

How does qtrl.ai ensure the AI doesn't make unpredictable changes?

qtrl is built on a principle of "permissioned autonomy." AI agents do not make changes autonomously; they operate within strictly defined rules and levels of access set by the team. All AI-generated tests or modifications are presented as suggestions for human review and approval. This governance layer, combined with full visibility into every agent action, ensures predictability and maintains human-in-the-loop control at all times.
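The idea of permissioned autonomy can be sketched as a gate that compares each requested agent action with the level a team has granted and records the decision. The level names, the action names, and the `decide` function below are hypothetical; they illustrate the pattern rather than qtrl's actual configuration.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Hypothetical autonomy levels, ordered from least to most permissive."""
    SUGGEST_ONLY = 1
    RUN_EXISTING_TESTS = 2
    MODIFY_TESTS = 3


# Minimum level each (hypothetical) agent action requires.
REQUIRED_LEVEL = {
    "propose_test": AutonomyLevel.SUGGEST_ONLY,
    "run_test": AutonomyLevel.RUN_EXISTING_TESTS,
    "edit_test": AutonomyLevel.MODIFY_TESTS,
}


def decide(action, granted, audit_log):
    """Allow the action if the granted level suffices; otherwise route it to a human."""
    required = REQUIRED_LEVEL[action]
    outcome = "allowed" if granted >= required else "needs_approval"
    audit_log.append((action, outcome))  # every decision is recorded
    return outcome


audit_log = []
first = decide("propose_test", AutonomyLevel.SUGGEST_ONLY, audit_log)
second = decide("edit_test", AutonomyLevel.SUGGEST_ONLY, audit_log)
```

Because every call appends to `audit_log`, the record of what the agent attempted exists whether or not the action was allowed.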

Can qtrl.ai integrate with our existing development and project management tools?

Yes, qtrl is designed for real-world workflows and offers built-in integrations for seamless operation within your existing tech stack. It supports requirements management integration, CI/CD pipeline tools, and other essential development software. This allows teams to maintain their current processes while centralizing and enhancing their QA activities within the qtrl platform, avoiding disruptive changes to established workflows.

Is qtrl.ai suitable for teams with no prior test automation experience?

Absolutely. qtrl is specifically designed for progressive adoption, making it an ideal starting point for teams new to automation. You can begin by using the platform solely for manual test management and collaboration. As the team becomes comfortable, you can leverage features like AI test generation from plain English, which lowers the technical barrier to entry and allows you to scale automation efforts at your own pace.

How does qtrl.ai handle sensitive data and security during testing?

Security is a cornerstone of qtrl's enterprise design. The platform supports per-environment variables and encrypted secrets for managing sensitive data like credentials and API keys. Crucially, these secrets are never exposed to the AI agents during test execution. qtrl also adheres to enterprise-grade security standards and offers detailed data processing agreements, making it a trustworthy choice for organizations with stringent security and privacy requirements.
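A common way to keep secrets away from an AI agent is to let the agent see only named placeholders and substitute real values at execution time. The sketch below assumes that pattern; the `{{secret:NAME}}` syntax and `resolve_for_execution` are invented for illustration and are not qtrl's documented mechanism.

```python
import re

# Hypothetical placeholder syntax: {{secret:NAME}}
PLACEHOLDER = re.compile(r"\{\{secret:([A-Z0-9_]+)\}\}")


def resolve_for_execution(step_text, secret_store):
    """Swap each placeholder for its secret value; raises KeyError if a name is unknown."""
    return PLACEHOLDER.sub(lambda m: secret_store[m.group(1)], step_text)


# The agent only ever works with this text, which contains no secret value.
agent_visible_step = "Type {{secret:LOGIN_PASSWORD}} into the password field"

# Held by the executor, outside the agent's context (example value only).
secret_store = {"LOGIN_PASSWORD": "example-only-value"}

executed_step = resolve_for_execution(agent_visible_step, secret_store)
```

The design choice is that substitution happens in the executor, so prompts, agent memory, and agent logs contain the placeholder name rather than the credential.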

Alternatives

Fallom Alternatives

Fallom is an AI-native observability platform designed for large language model (LLM) and agent workloads. It provides real-time visibility into LLM operations, enabling organizations to track, debug, and manage costs across their AI initiatives.

Users seek alternatives to Fallom for various reasons, including pricing structure, specific feature sets, better scalability, or the need for integration with existing platforms. Requirements may also change over time as the AI observability landscape evolves.

When evaluating alternatives, consider functionality, ease of use, compliance capabilities, and overall return on investment. Also check that the alternative is compatible with current workflows and provides the insights and data needed for effective decision-making.

qtrl.ai Alternatives

qtrl.ai is a modern QA and test automation platform designed for software engineering teams. It combines structured test management with intelligent AI agents to help teams scale their testing efforts while maintaining full governance and control over the process. This places it within the broader categories of test automation and developer tools.

Users often evaluate alternatives for strategic reasons: budget constraints, the need for niche features not covered by a general platform, or integration requirements with an existing toolchain. Some organizations also have different priorities, such as a heavier focus on open-source frameworks or a preference for a developer-centric coding environment over a managed platform.

When assessing alternatives, align the key considerations with core business objectives. Evaluate the total cost of ownership, not just licensing fees. Scrutinize the platform's approach to AI: is it transparent and governable, or a black box? Finally, confirm that it provides the enterprise capabilities you need for security, compliance, and integration into your development lifecycle, so that faster releases do not introduce new risk.