Agenta vs Fallom

Side-by-side comparison to help you choose the right AI tool.

Agenta centralizes LLMOps to accelerate reliable AI development and boost team productivity.

Last updated: March 1, 2026

Fallom delivers real-time observability for LLMs, enhancing tracking, debugging, and cost management for AI operations.

Last updated: February 28, 2026

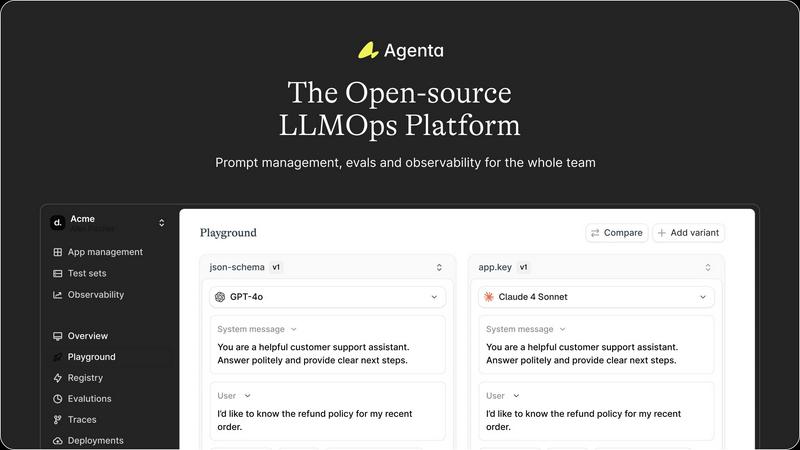

Visual Comparison

Agenta

Fallom

Feature Comparison

Agenta

Unified Playground & Version Control

Agenta provides a centralized playground where teams can experiment with different prompts, parameters, and foundation models from various providers in a side-by-side comparison view. This model-agnostic approach prevents vendor lock-in. Every iteration is automatically versioned, creating a complete audit trail of changes. This feature eliminates the chaos of managing prompts across disparate documents and ensures that any experiment or production configuration can be precisely tracked, replicated, or rolled back, fostering disciplined experimentation.

Automated & Human-in-the-Loop Evaluation

The platform replaces subjective "vibe testing" with a systematic evaluation framework. Teams can integrate LLM-as-a-judge evaluators, custom code, or built-in metrics to automatically assess performance. Crucially, Agenta supports full-trace evaluation for complex agents, testing each reasoning step, not just the final output. It seamlessly incorporates human feedback from domain experts into the evaluation workflow, turning qualitative insights into quantitative evidence for decision-making before any deployment.

Production Observability & Debugging

Agenta offers comprehensive observability by tracing every LLM application request in production. This allows teams to pinpoint exact failure points in complex chains or agentic workflows. Any problematic trace can be annotated by the team or flagged by users and converted into a test case with a single click, closing the feedback loop. Live monitoring and online evaluations help detect performance regressions in real time, supporting system reliability.

Cross-Functional Collaboration Hub

Agenta breaks down silos by providing tailored interfaces for every team member. Domain experts can safely edit and experiment with prompts through a dedicated UI without writing code. Product managers and experts can directly run evaluations and compare experiments. With full parity between its API and UI, Agenta integrates both programmatic and manual workflows into one central hub, aligning technical and business stakeholders on a unified LLMOps process.

Fallom

Real-Time Observability

Fallom offers real-time observability for AI agents, allowing organizations to track tool calls, analyze execution timing, and debug performance issues with confidence. This transparency ensures that teams can identify bottlenecks and optimize workflows proactively.

Cost Attribution

With Fallom, businesses can track spending per model, user, and team, achieving full cost transparency for budgeting and chargeback purposes. This feature empowers organizations to manage their AI expenditures effectively and optimize resource allocation.
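
As a rough illustration of what per-model, per-user, and per-team attribution involves, the sketch below sums token costs over a list of call records. It is not Fallom's SDK or data model: `CallRecord`, `PRICES_PER_1K`, the model names, and the prices are all hypothetical and exist only for this example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CallRecord:
    """One LLM call (hypothetical shape, not a real Fallom record)."""
    model: str
    user: str
    team: str
    prompt_tokens: int
    completion_tokens: int


# Hypothetical USD prices per 1,000 tokens: (prompt, completion).
PRICES_PER_1K = {
    "model-a": (0.50, 1.50),
    "model-b": (0.10, 0.30),
}


def call_cost(record: CallRecord) -> float:
    """Cost of a single call from its token counts and the price table."""
    prompt_price, completion_price = PRICES_PER_1K[record.model]
    return (
        record.prompt_tokens * prompt_price
        + record.completion_tokens * completion_price
    ) / 1000


def attribute_costs(records, key: str) -> dict:
    """Sum call costs grouped by one dimension: 'model', 'user', or 'team'."""
    totals = defaultdict(float)
    for record in records:
        totals[getattr(record, key)] += call_cost(record)
    return dict(totals)


records = [
    CallRecord("model-a", "alice", "search", 1000, 1000),
    CallRecord("model-b", "bob", "search", 2000, 0),
    CallRecord("model-a", "alice", "support", 0, 2000),
]
costs_by_team = attribute_costs(records, "team")
costs_by_model = attribute_costs(records, "model")
```

Grouping by a single key keeps the same function usable for budgeting (by team) and chargeback (by user) without separate code paths.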

Compliance Ready

Fallom is equipped with comprehensive audit trails, ensuring organizations can meet regulatory requirements such as the EU AI Act, SOC 2, and GDPR. Features like input/output logging, model versioning, and user consent tracking help maintain compliance and support organizational accountability.

Session Tracking and Debugging

Fallom allows users to group traces by session, user, or customer, providing complete contextual visibility into interactions. This feature facilitates debugging and enhances the understanding of user behavior, enabling data-driven decision-making.
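
Grouping traces by session, user, or customer can be pictured with a small sketch. The trace dictionaries and their field names (`session_id`, `user_id`, `start_ms`) are assumptions made for illustration and do not reflect Fallom's actual schema.

```python
from collections import defaultdict


def group_traces(traces, key):
    """Group trace dicts by `key` and order each group by start time."""
    groups = defaultdict(list)
    for trace in traces:
        groups[trace[key]].append(trace)
    for group in groups.values():
        group.sort(key=lambda trace: trace["start_ms"])
    return dict(groups)


# Hypothetical traces; field names are illustrative only.
traces = [
    {"id": "t3", "session_id": "s1", "user_id": "u1", "start_ms": 300},
    {"id": "t1", "session_id": "s1", "user_id": "u1", "start_ms": 100},
    {"id": "t2", "session_id": "s2", "user_id": "u2", "start_ms": 200},
]
by_session = group_traces(traces, "session_id")
session_one_ids = [trace["id"] for trace in by_session["s1"]]
```

Sorting within each group gives the chronological view of a session that makes a multi-turn interaction easier to follow when debugging.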

Use Cases

Agenta

Streamlining Enterprise Chatbot Development

Development teams building customer-facing or internal support chatbots use Agenta to manage hundreds of prompt variations for different intents and scenarios. Product managers and subject matter experts collaborate directly in the platform to refine responses based on real user interactions. Automated evaluations against quality and safety test sets ensure each new prompt version is an improvement before being promoted, drastically reducing rollout cycles and improving answer consistency.

Building and Auditing Complex AI Agents

For teams developing multi-step AI agents involving reasoning, tool use, and retrieval, Agenta is critical for debugging and evaluation. The full-trace observability allows engineers to see exactly where in an agent's chain a failure occurred. They can save these errors as test cases and use the playground to iteratively fix issues. Systematic evaluation of each intermediate step ensures the entire agentic workflow is robust, not just its individual components.

Managing LLM Application Lifecycle for Product Teams

Cross-functional product teams use Agenta as their central LLM lifecycle management platform. From the initial prompt experimentation phase, through rigorous evaluation with business-defined metrics, to post-deployment monitoring, all activities are coordinated in one system. This end-to-end visibility enables data-driven decisions, ensures compliance with internal standards, and provides a clear audit trail for all changes made to the AI application.

Rapid Prototyping and A/B Testing LLM Features

When integrating new LLM-powered features into an existing product, Agenta accelerates the prototyping phase. Developers can quickly test different models and prompts using the unified playground. Teams can then design and run scalable A/B tests (online evaluations) directly within Agenta, comparing the performance of different experimental variants in a live environment with real user data to determine the optimal configuration with statistical confidence.
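
One common way to attach statistical confidence to an A/B comparison of two variants is a two-proportion z-test on a binary success metric. The sketch below is a generic illustration using only the Python standard library; the source does not say which statistical method Agenta uses, so this should not be read as its implementation. The function name and the example counts are hypothetical.

```python
import math


def two_proportion_z_test(successes_a, total_a, successes_b, total_b):
    """Return (z, two-sided p-value) for the difference in success rates."""
    if total_a <= 0 or total_b <= 0:
        raise ValueError("each variant needs at least one observation")
    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    # Pooled rate under the null hypothesis that both variants perform equally.
    pooled = (successes_a + successes_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        # All outcomes identical in both variants: no evidence of a difference.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: variant A succeeds 100/1000 times, variant B 150/1000.
z_score, p_value = two_proportion_z_test(100, 1000, 150, 1000)
```

A small p-value suggests the observed difference is unlikely to be noise; the normal approximation is only reasonable when each variant has enough observations.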

Fallom

Enhancing Debugging Processes

Organizations can leverage Fallom to streamline their debugging processes by tracing every function call, argument, and result in their agents. This visibility reduces the time spent on identifying and resolving issues within LLM workflows.

Budget Management and Cost Control

Fallom's cost attribution feature is ideal for finance teams that need to monitor and control AI-related expenditures. By tracking costs associated with each model and user, businesses can make informed decisions about their AI investments.

Regulatory Compliance Management

For businesses operating in regulated industries, Fallom provides the necessary tools to maintain compliance with audit trails and privacy controls. This ensures that organizations can operate confidently while adhering to stringent legal requirements.

Performance Evaluation and Optimization

Fallom enables organizations to run evaluations on LLM outputs, allowing teams to catch regressions before production. This capability helps maintain high-quality outputs and optimizes the overall performance of AI models.

Overview

About Agenta

Agenta is an enterprise-grade, open-source LLMOps platform engineered to solve the critical organizational and technical challenges faced by AI development teams building with large language models. In a landscape where LLMs are inherently unpredictable, Agenta provides the essential infrastructure to transform chaotic, error-prone workflows into structured, reliable, and collaborative processes. The platform serves as a single source of truth for cross-functional teams, including developers, product managers, and domain experts, enabling them to centralize prompt management, conduct systematic evaluations, and gain full observability into their AI systems. By integrating these capabilities into one cohesive environment, Agenta directly addresses the inefficiencies of scattered prompts across communication tools and siloed team efforts. The core value proposition is clear: empower organizations to ship high-quality, reliable LLM applications faster by minimizing guesswork, reducing debugging time, and providing the evidence-based framework needed for continuous improvement and confident deployment.

About Fallom

Fallom is an AI-native observability platform designed to improve the performance and management of large language models (LLMs) and agent workloads. By giving organizations visibility into every LLM call made in production, Fallom enables end-to-end tracing that covers prompts, outputs, tool calls, tokens, latency, and cost per call. The platform is tailored for businesses that need robust observability tools to navigate the complexities of AI operations, so that teams can monitor usage in real time, debug issues swiftly, and allocate spending across models, users, and teams. Additionally, Fallom provides session, user, and customer-level context, which is particularly important for organizations operating in regulated environments. This includes enterprise-ready audit trails, logging, model versioning, and consent tracking to meet compliance standards. With a single OpenTelemetry-native SDK, teams can instrument their applications within minutes, which makes Fallom a strong fit for organizations aiming to improve their LLM operational efficiency and compliance readiness.

Frequently Asked Questions

Agenta FAQ

Is Agenta truly model and framework agnostic?

Yes, Agenta is designed to be fully agnostic. It integrates with any major LLM provider (OpenAI, Anthropic, Cohere, open-source models, etc.) and supports popular development frameworks like LangChain and LlamaIndex. This architecture prevents vendor lock-in, allowing your team to use the best model for each specific task and switch providers as needed without overhauling your entire LLMOps pipeline.

How does Agenta facilitate collaboration with non-technical team members?

Agenta provides specialized user interfaces that empower product managers and domain experts. These stakeholders can directly access the playground to edit prompts, create evaluation test sets from production errors, and run comparison experiments—all without writing or interacting with code. This bridges the gap between technical implementation and business expertise, ensuring the AI product is shaped by those who understand the domain best.

Can we use our own custom metrics and evaluators?

Absolutely. While Agenta offers built-in evaluators and supports the LLM-as-a-judge pattern, it is built for extensibility. Teams can integrate their own custom code evaluators to implement proprietary business logic, compliance checks, or domain-specific quality metrics. This flexibility ensures your evaluation suite measures what truly matters for your specific application and success criteria.
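
To make the idea of a custom code evaluator concrete, here is a minimal sketch of a compliance-style check written as a plain Python function. It does not use Agenta's SDK, and the function names, the scoring convention (1.0 for pass, 0.0 for fail), and the sample outputs are assumptions for illustration only.

```python
import re

# Simple pattern for email-like strings; a real check may need to be stricter.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def no_email_leak(output: str) -> float:
    """Score 1.0 if the output contains no email-like string, else 0.0."""
    return 0.0 if EMAIL_PATTERN.search(output) else 1.0


def run_evaluator(evaluator, outputs) -> float:
    """Average an evaluator's scores over a list of model outputs."""
    scores = [evaluator(output) for output in outputs]
    return sum(scores) / len(scores) if scores else 0.0


# Hypothetical model outputs from a test set.
sample_outputs = [
    "Please contact support through the help portal.",
    "You can reach Jane at jane@example.com for details.",
]
pass_rate = run_evaluator(no_email_leak, sample_outputs)
```

Keeping each evaluator a pure function from output to score makes it straightforward to combine proprietary checks with built-in or LLM-as-a-judge evaluators in one report.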

How does the observability feature aid in debugging complex failures?

Agenta captures the complete trace of every LLM call, including inputs, outputs, intermediate steps, and tool executions in an agentic workflow. When a failure occurs, developers are not left guessing; they can drill down into the exact step where the error originated. This granular visibility transforms debugging from a time-consuming investigation into a precise and efficient process, significantly reducing mean time to resolution (MTTR).
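
Drilling down to the step where an error originated can be sketched as a scan over the ordered steps of a trace. The trace structure below (a `steps` list whose items carry `name` and `error` fields) is a hypothetical shape chosen for this example, not Agenta's trace format.

```python
def first_failed_step(trace):
    """Return (index, step) for the earliest step with an error, or None."""
    for index, step in enumerate(trace["steps"]):
        if step.get("error") is not None:
            return index, step
    return None


# Hypothetical agent trace: retrieval succeeds, the tool call fails,
# and the final generation step inherits the failure.
example_trace = {
    "steps": [
        {"name": "retrieve_documents", "error": None},
        {"name": "call_pricing_tool", "error": "timeout after 30s"},
        {"name": "generate_answer", "error": "missing tool result"},
    ]
}
failure = first_failed_step(example_trace)
```

Returning the earliest failing step, rather than the last, points at the likely root cause instead of a downstream symptom.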

Fallom FAQ

What is Fallom used for?

Fallom is used for observability in large language model operations: it traces LLM and agent calls in production so that organizations can monitor, debug, and optimize their AI workloads.

How does Fallom ensure compliance with regulations?

Fallom ensures compliance by providing comprehensive audit trails, input/output logging, model versioning, and user consent tracking, making it suitable for organizations in regulated industries.

Can Fallom integrate with existing AI infrastructures?

Yes, Fallom utilizes a single OpenTelemetry-native SDK that is compatible with various AI providers, ensuring seamless integration into existing systems without vendor lock-in.

How quickly can teams get started with Fallom?

Teams can set up Fallom in under five minutes, allowing organizations to start tracing and monitoring their AI agents almost immediately, boosting productivity from the outset.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed to centralize and streamline the development of reliable large language model applications. It falls within the development and operations category, specifically addressing the collaborative workflows needed for prompt engineering, evaluation, and debugging in enterprise AI projects.

Teams often evaluate alternatives to Agenta for various strategic reasons. These can include specific budget constraints, the need for different feature integrations, or platform requirements such as on-premise deployment versus a managed service. The search for a different tool is a standard part of the procurement process to ensure the selected solution aligns perfectly with an organization's technical stack and operational maturity.

When assessing any LLMOps alternative, key considerations should include the platform's ability to enhance team productivity and provide a clear return on investment. Look for robust capabilities in centralized prompt management, automated evaluation frameworks, and comprehensive observability. The ideal solution should transform chaotic, ad-hoc processes into a structured, collaborative, and data-driven workflow that accelerates time-to-market for AI applications while minimizing development risks.

Fallom Alternatives

Fallom is an AI-native observability platform designed for large language model (LLM) and agent workloads. It provides real-time visibility into LLM operations, enabling organizations to track, debug, and manage costs efficiently across their AI initiatives.

As businesses increasingly adopt AI technologies, users often seek alternatives to Fallom for various reasons, including pricing structure, specific feature sets, or the need for integration with existing platforms. When evaluating alternatives, it is essential to consider factors such as functionality, ease of use, compliance capabilities, and overall return on investment to ensure the chosen solution meets organizational needs.

In the rapidly evolving landscape of AI observability, organizations may find that their requirements change over time. Users often look for alternatives that offer enhanced features, better scalability, or improved cost management tools. It is crucial to assess the compatibility of the alternative with current workflows and to ensure that it can provide the necessary insights and data for effective decision-making. A thorough evaluation of potential alternatives can help businesses maintain operational efficiency and compliance as they navigate the complexities of AI deployment.