Hostim.dev vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

Hostim.dev

Hostim.dev deploys Docker apps with managed databases on EU infrastructure for predictable, compliant hosting.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI lets you benchmark 100+ LLMs on cost, speed, quality, and stability for your specific tasks, in minutes.

Last updated: March 26, 2026

Visual Comparison

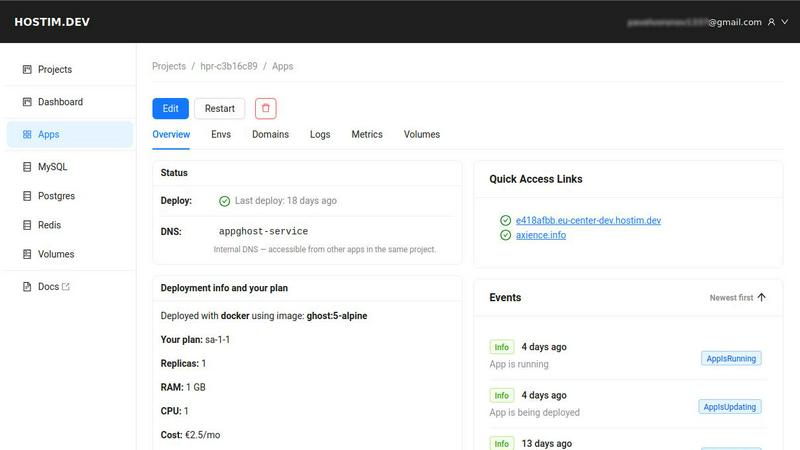

Hostim.dev

OpenMark AI

Feature Comparison

Hostim.dev

Simplified Docker & Compose Deployment

Deploy full application stacks in minutes by pasting a Docker Compose file, pushing to a Git repository, or specifying a Docker image. You do not need to write Kubernetes manifests or understand Kubernetes internals, which can cut initial deployment setup from hours to minutes and supports rapid iteration and continuous delivery.

Built-in Managed Databases & Persistent Storage

Hostim.dev automatically provisions managed database instances (MySQL, PostgreSQL, Redis) and persistent volumes and connects them to your project's isolated environment. This removes the manual setup, security configuration, and ongoing maintenance of stateful services, so teams get durable data storage without taking on that operational work themselves.
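How the database is wired in is not described here. A common convention, assumed for this sketch and not confirmed for Hostim.dev, is for a platform to pass connection details to the app as a URL in an environment variable; `DATABASE_URL` below is a hypothetical name. The application then only needs to parse that URL:

```python
import os
from urllib.parse import urlsplit


def parse_database_url(url: str) -> dict:
    """Split a URL like postgresql://user:pass@host:5432/dbname into its parts."""
    parts = urlsplit(url)
    return {
        "scheme": parts.scheme,
        "user": parts.username,
        "password": parts.password,
        "host": parts.hostname,
        "port": parts.port,  # int, or None if the URL has no port
        "database": parts.path.lstrip("/"),
    }


# DATABASE_URL is a hypothetical variable name; the fallback is a local dev default.
config = parse_database_url(
    os.environ.get("DATABASE_URL", "postgresql://app:secret@localhost:5432/appdb")
)
print(config["host"], config["port"], config["database"])
```

Reading connection details from the environment keeps credentials out of the image and lets the same container run unchanged in every project.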

EU Bare-Metal Hosting with GDPR Compliance

Applications are hosted on dedicated bare-metal servers in Germany, which keeps data in the EU and supports GDPR compliance by default. Dedicated hardware provides predictable performance, and EU data residency gives businesses serving European customers data sovereignty and fewer of the latency and legal complications that come with non-EU cloud providers.

Project-Centric Isolation & Transparent Billing

Each project runs in its own isolated Kubernetes namespace with automatic HTTPS, live metrics, and logging. Combined with per-project cost tracking and transparent, predictable pricing, this enables precise budgeting, clean client handovers, and accurate cost attribution, and helps avoid unexpected bills.
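To illustrate per-project cost attribution, the sketch below totals hourly usage for each project. The usage records and euro-per-hour rates are hypothetical placeholders, not Hostim.dev's prices; `Decimal` is used so currency sums do not pick up floating-point error.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical usage records: (project, resource, hours_used, rate in EUR per hour).
# The rates are illustrative placeholders, not Hostim.dev's actual prices.
USAGE = [
    ("client-a", "app", 720, Decimal("0.0035")),
    ("client-a", "postgres", 720, Decimal("0.0020")),
    ("client-b", "app", 100, Decimal("0.0035")),
]


def cost_per_project(usage) -> dict[str, Decimal]:
    """Sum hours x hourly rate for each project, rounded to whole cents."""
    totals = defaultdict(Decimal)  # missing projects start at Decimal("0")
    for project, _resource, hours, rate in usage:
        totals[project] += hours * rate
    return {project: total.quantize(Decimal("0.01")) for project, total in totals.items()}


for project, total in cost_per_project(USAGE).items():
    print(project, f"€{total}")
```

Grouping by project rather than by resource type is what makes each client's total directly quotable.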

OpenMark AI

Task Description in Plain Language

OpenMark AI lets users define benchmarking tasks in natural language. This simplifies specifying complex requirements and makes benchmarking accessible to teams without deep technical expertise. Users can describe tasks in domains such as classification, translation, and data extraction, and every model in the comparison is then evaluated against the same task description and criteria.

Real-Time API Call Comparisons

The platform shows side-by-side results from live API calls to multiple AI models rather than relying on cached results or marketing data. Because every model runs the same task under the same conditions, the resulting performance metrics give a more reliable basis for deciding which model works best for a specific task.
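A live comparison ultimately comes down to timing real requests. This sketch measures wall-clock latency for any callable; `fake_model` is a hypothetical stand-in for a provider SDK call, and it is not OpenMark AI's implementation. The median is reported because individual network timings are noisy.

```python
import statistics
import time
from typing import Callable


def measure_latency(call: Callable[[str], str], prompt: str, runs: int = 5) -> dict:
    """Time repeated calls with the same prompt; report latency in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call(prompt)  # in a real benchmark this would be a live API request
        samples.append((time.perf_counter() - start) * 1000)
    return {
        "runs": runs,
        "median_ms": statistics.median(samples),
        "max_ms": max(samples),
    }


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a provider SDK call; sleeps to mimic network time."""
    time.sleep(0.01)
    return prompt.upper()


print(measure_latency(fake_model, "Classify the sentiment: great product!"))
```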

Cost Efficiency Analysis

OpenMark AI lets users compare the actual cost of each API call across models. This shows teams the financial implications of each choice and supports data-driven decisions that balance quality against expense, which matters most for organizations focused on the return on their AI spending.
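Many LLM providers bill per token, with separate rates for input and output tokens, usually quoted per million tokens. Under that assumption, the cost of one call follows directly from its token counts. The prices in the example are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Per-token pricing, quoted in USD per one million tokens."""
    input_per_million: float
    output_per_million: float


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single API call, given its token counts."""
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


# Hypothetical prices: $0.50 per 1M input tokens, $1.50 per 1M output tokens.
cheap_model = Pricing(input_per_million=0.50, output_per_million=1.50)
print(f"${request_cost(cheap_model, 1200, 300):.5f}")  # $0.00105
```

Because output tokens are often priced higher than input tokens, a model that writes longer answers can cost more per request even at a similar headline price.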

Consistency and Stability Metrics

OpenMark AI evaluates model consistency by running the same task multiple times and analyzing how stable the outputs are. This matters for applications where reliability and repeatability are essential, since it helps teams select models that perform consistently across repeated runs.
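The exact stability metric is not specified. One simple measure, swapped in here as an assumption, is the share of repeated runs that agree with the most common (normalized) output, reported alongside the spread of quality scores across those runs:

```python
from collections import Counter
from statistics import pstdev


def agreement_rate(outputs: list[str]) -> float:
    """Fraction of runs matching the most common output (1.0 = fully consistent)."""
    if not outputs:
        raise ValueError("need at least one run")
    # Normalize whitespace and case so trivial formatting differences don't count.
    normalized = [o.strip().lower() for o in outputs]
    _, top_count = Counter(normalized).most_common(1)[0]
    return top_count / len(normalized)


def score_spread(scores: list[float]) -> float:
    """Population standard deviation of quality scores across repeated runs."""
    return pstdev(scores)


# Five hypothetical runs of the same sentiment-classification prompt.
runs = ["Positive", "positive", "Negative", "Positive ", "positive"]
print(agreement_rate(runs))  # 0.8
print(score_spread([8.0, 8.0, 6.0, 8.0, 8.0]))
```

Exact-match agreement suits short, categorical outputs; free-form text would need a softer similarity measure.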

Use Cases

Hostim.dev

Accelerating Startup MVP Launches

Startups can skip costly, time-consuming infrastructure setup and potentially deploy their minimum viable product (MVP) within a day. With built-in databases and one-click deployments, small teams can validate market fit and iterate quickly, conserving capital and shortening their path to revenue while keeping infrastructure work to a minimum.

Streamlining Agency Client Projects

Digital agencies can deploy and manage an isolated application environment for each client from Docker images, Git repositories, or Docker Compose files. Per-project billing gives clients clear, quotable costs, while secure isolation and simple handover streamline project lifecycle management and can improve profitability.

Enabling Enterprise Proof-of-Concepts

Enterprise development teams can quickly spin up secure, compliant environments for testing new microservices, internal tools, or architecture prototypes. Project isolation and EU hosting help satisfy corporate IT and compliance requirements, allowing lower-risk experimentation and faster decision-making.

Facilitating Educational & Portfolio Development

Students and developers building portfolio projects get access to production-like infrastructure with managed databases and real deployment workflows. Hands-on experience with industry-standard tools such as Docker and Git speeds up learning and produces demonstrable work that can improve employability, often at lower cost than traditional cloud platforms.

OpenMark AI

Model Selection for AI Features

OpenMark AI is useful for product teams choosing an AI model for a new feature. By benchmarking multiple models against the specific task, teams can identify the model that best fits their goals and improve the quality of the final product.

Performance Validation

Developers can use OpenMark AI to validate the performance of models before deployment. By testing models under real-world conditions, teams can gain confidence in their choices, mitigating the risk of subpar performance after launch.

Cost Analysis for Budget Planning

Organizations can leverage OpenMark AI to perform detailed cost analyses of different AI models. This allows for more strategic budget planning, ensuring that AI expenditures are aligned with expected business outcomes and helping teams optimize their spending.

Research and Development

In R&D scenarios, OpenMark AI facilitates the exploration of new AI models and techniques. Researchers can quickly benchmark cutting-edge models against established ones, fostering innovation by identifying promising candidates for further development.

Overview

About Hostim.dev

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) built to remove DevOps overhead and shorten time-to-market for containerized applications. It provides a production-ready environment where developers deploy directly from Docker images, Git repositories, or Docker Compose files in minutes rather than days. The platform automatically provisions and connects managed services, including MySQL, PostgreSQL, Redis, and persistent storage, inside a secure, isolated network for each project. By abstracting away Kubernetes and infrastructure management, Hostim.dev positions itself as letting engineering teams redirect over 90% of their time from infrastructure maintenance to core product development. With transparent per-project hourly billing starting at €2.5/month and GDPR-compliant hosting on bare-metal servers in Germany, it targets startups, digital agencies, SaaS companies, and other businesses that value operational simplicity, cost predictability, and compliance.

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). It helps developers and product teams evaluate and validate AI models before integrating them into their applications. Users describe their test requirements in plain language, and OpenMark AI runs real API calls to produce side-by-side comparisons of model outputs. The platform reports cost per request, response latency, scored quality, and output stability across repeated runs, so teams can base decisions on measured performance and its variance rather than on marketing claims. With a user-friendly interface and a catalog of 100+ supported models, OpenMark AI suits organizations that want to pick the most appropriate and cost-efficient model for a specific task.

Frequently Asked Questions

Hostim.dev FAQ

What does the free tier include?

The platform offers a 5-day free trial project with no credit card required. The trial includes full access to deployment features (Docker, Git, Compose), built-in databases, persistent storage, automatic HTTPS, and project isolation, so you can evaluate the platform with your actual application stack.

Can I deploy with just a Docker Compose file?

Absolutely. Hostim.dev is built to accept a standard Docker Compose file as the primary deployment method. You can paste your docker-compose.yml directly into the dashboard, and the platform will automatically provision all defined services, networks, and volumes, launching your full multi-container application in minutes.
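Hostim.dev's internals are not documented here, but the job described above (provisioning every service, network, and volume a Compose file defines) can be sketched. This hypothetical example assumes the file has already been loaded into a dict, for instance with PyYAML's `yaml.safe_load`, and derives a start order from `depends_on`, which Compose accepts as either a list or a mapping.

```python
# A docker-compose.yml already parsed into a dict (e.g. by PyYAML's yaml.safe_load).
COMPOSE = {
    "services": {
        "web": {"image": "nginx:1.27", "ports": ["80:80"], "depends_on": ["api"]},
        "api": {"build": ".", "depends_on": {"db": {"condition": "service_started"}},
                "volumes": ["uploads:/data"]},
        "db": {"image": "postgres:16", "volumes": ["pgdata:/var/lib/postgresql/data"]},
    },
    "volumes": {"pgdata": {}, "uploads": {}},
}


def start_order(services: dict) -> list[str]:
    """Order services so each one starts after everything in its depends_on."""
    order: list[str] = []
    visiting: set[str] = set()
    done: set[str] = set()

    def visit(name: str) -> None:
        if name in done:
            return
        if name in visiting:
            raise ValueError(f"dependency cycle involving {name!r}")
        visiting.add(name)
        # Iterating works for both forms: a list of names or a mapping keyed by name.
        for dep in services[name].get("depends_on", []):
            visit(dep)
        visiting.remove(name)
        done.add(name)
        order.append(name)

    for name in sorted(services):
        visit(name)
    return order


def provisioning_plan(compose: dict) -> dict:
    """List the top-level resources a platform would need to create."""
    services = compose.get("services", {})
    return {
        "services": sorted(services),
        "volumes": sorted(compose.get("volumes", {})),
        # Compose also creates a default project network; only declared ones are listed.
        "networks": sorted(compose.get("networks", {})),
        "start_order": start_order(services),
    }


print(provisioning_plan(COMPOSE))
```

The depth-first ordering is a standard topological sort; it also rejects circular `depends_on` chains, which Compose itself refuses to start.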

Where is my application hosted?

All applications on Hostim.dev are hosted on dedicated bare-metal servers in a secure, Tier III+ data center in Germany. Dedicated resources provide consistent performance, and all data stays within the European Union, which supports GDPR compliance.

Do I need to know Kubernetes to use Hostim.dev?

No Kubernetes knowledge is required. Hostim.dev uses Kubernetes as a robust underlying orchestration layer but completely abstracts its complexity through a simplified developer interface. You interact only with Docker, Git, or Compose concepts, allowing you to benefit from Kubernetes' power without its operational learning curve.

OpenMark AI FAQ

What types of tasks can I benchmark with OpenMark AI?

OpenMark AI supports a wide range of tasks including classification, translation, data extraction, research, Q&A, and more. Users can describe any task they wish to evaluate, making it flexible and adaptable to various needs.

Do I need API keys to use OpenMark AI?

No, OpenMark AI eliminates the need for users to configure separate API keys for different models. The platform handles all necessary API calls within its environment, simplifying the benchmarking process.

How is cost efficiency measured in OpenMark AI?

Cost efficiency is measured by comparing the actual costs of API calls against the quality of the outputs generated. OpenMark AI provides detailed insights into how much each request costs, enabling users to make informed, financially sound decisions.
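The FAQ does not give the exact formula. One simple way to relate cost to quality, assumed here, is quality points per dollar with a minimum-quality floor, so that a very cheap but weak model does not win by default. Model names and figures are hypothetical.

```python
from typing import NamedTuple


class Result(NamedTuple):
    model: str
    quality: float   # mean scored quality across runs, e.g. on a 0-10 scale
    cost_usd: float  # total API cost of the benchmark run


def rank_by_value(results: list[Result], min_quality: float = 0.0) -> list[Result]:
    """Rank models by quality per dollar, skipping any below a quality floor."""
    eligible = [r for r in results if r.quality >= min_quality and r.cost_usd > 0]
    return sorted(eligible, key=lambda r: r.quality / r.cost_usd, reverse=True)


# Hypothetical benchmark results for three placeholder models.
results = [
    Result("model-a", quality=8.5, cost_usd=0.020),
    Result("model-b", quality=7.9, cost_usd=0.004),
    Result("model-c", quality=6.0, cost_usd=0.001),
]
for r in rank_by_value(results, min_quality=7.0):
    print(r.model, f"{r.quality / r.cost_usd:.0f} quality points per dollar")
```

The floor matters: without it, model-c would rank first purely because it is the cheapest.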

Can I save my benchmarking tasks for later use?

Yes, OpenMark AI allows users to save their benchmarking tasks for future reference. This feature enables teams to revisit and compare their results over time, ensuring continuous optimization of their AI model selection process.

Alternatives

Hostim.dev Alternatives

Hostim.dev is a specialized bare-metal Platform-as-a-Service (PaaS) designed to streamline the deployment of containerized applications. It removes DevOps overhead with one-click deployments from Docker or Git, integrated databases, and secure EU-hosted infrastructure, which makes it a strong option for developers and businesses seeking operational simplicity and predictable costs.

Users may still explore alternatives for several reasons: hosting requirements outside the EU, a preference for other pricing models (such as long-term commitments instead of transparent hourly billing), or a need for built-in services beyond MySQL, PostgreSQL, and Redis. Scalability needs and specific compliance certifications can also prompt an evaluation of other platforms.

When assessing an alternative, align the key criteria with your business objectives. Evaluate the total cost of ownership, including hidden fees for data transfer or support. Examine how efficient the deployment workflow is and how it affects developer productivity. Finally, confirm that the platform's security posture, compliance standards, and reliability meet your organization's risk-management and operational-continuity requirements.

OpenMark AI Alternatives

OpenMark AI is a web application designed for task-level benchmarking of large language models (LLMs). It allows developers and product teams to evaluate over 100 models based on cost, speed, quality, and stability during a single session without the hassle of managing multiple API keys. Users typically seek alternatives to OpenMark AI for a variety of reasons, including pricing considerations, feature sets, or specific platform requirements. When selecting an alternative, it's essential to assess the comprehensiveness of the benchmarking capabilities, ease of use, and the relevance of the models supported.